Standard RAG vs Agentic RAG for Customer-Facing Chatbots: Which Does Your Chatbot Need?

Your standard RAG chatbot answered FAQs perfectly for three months. Then something shifted.

Customers started asking multi-part questions. Your support bot started retrieving the right documents but generating the wrong answers. Your CRM still needed manual updates after every chat session. The bot could tell a customer their invoice total, but it couldn’t check it, flag the anomaly, cross-reference the discount tier, and open a support ticket in one continuous flow.

That’s not a prompt engineering problem. That’s an architecture problem.

The conversational AI industry is going through a generational shift, and most businesses are stuck between two architectural generations without a clear framework for deciding which one they actually need.

Gen 1 chatbots followed rigid decision trees.

Gen 2 introduced Retrieval-Augmented Generation (RAG) — grounding an LLM’s answers in your actual company data to cut hallucination rates.

Gen 3 is where the agent loop (observe → plan → act → reflect) lets the system resolve the customer’s goal, not just their question.

Most businesses deployed Gen 2 and called it done. The problem is that Gen 2 has a hard ceiling. And when your business hits it, the symptoms look like poor bot performance, but the cause is architectural.

Not sure yet whether to build a custom AI system or use an off-the-shelf bot? Read our analysis of Custom AI Development vs. Off-the-Shelf Bots: Which Delivers Real ROI? before evaluating architecture, the build decision comes before the architecture decision.

What Standard RAG Actually Does (and Where It Stops)

The standard RAG pipeline is linear and single-pass:

User Query → Embedding Model → Vector Database Lookup → Top-K Chunk Retrieval → LLM Answer Generation → Response

One shot. The system embeds your query, searches a vector database (Pinecone, Weaviate, ChromaDB) for semantically similar chunks, pulls the top-k results, feeds them into the LLM’s context window, and generates a response. If the retrieval surfaces the right document, the answer is good. If it doesn’t, there is no retry, no self-correction, no second attempt.

For the right use cases, this is genuinely sufficient. Single-document lookups like “What are the terms of plan Y?” or “What does this configuration option do?” are handled cleanly. The pipeline is fast, cost-predictable, and easy to debug.

But three specific failure modes signal you’ve hit the ceiling:

Single-source retrieval failure: the wrong document is retrieved, the LLM generates a plausible-sounding answer, and there is no mechanism to catch the error before it reaches the user. Hallucination climbs not because the LLM is guessing, but because the retrieval step failed it.

Multi-part query collapse: a customer asks: “Check my last invoice, apply the loyalty discount, and confirm whether my total matches what was quoted in my contract.” Standard RAG collapses this into a single context window with a single retrieval pass. The result is a partial, incoherent answer that forces the customer to repeat themselves or escalate.

No action capability: the bot answers, but it cannot act. It cannot update a CRM field, book an appointment, escalate a ticket, or write back to any external system. For many businesses, this gap causes the highest measurable revenue leakage.

What Agentic RAG Actually Changes?

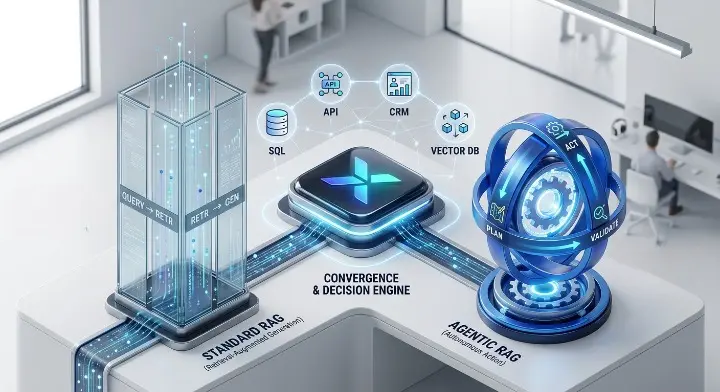

Agentic RAG is not a different product; it is RAG with a control loop added on top.

The core structural shift: instead of one linear pass, the system runs a

Retrieve → Reason → Decide

loop that repeats until a stop condition is met. Stop conditions include a high-confidence answer with cited sources, maximum step count reached, token budget cap hit, or escalation trigger fired. The loop is bounded and deterministic.

Four agent types power the capabilities:

Query Planning Agent: Before retrieval begins, this agent clarifies vague queries, expands them with related terms, segments complex multi-part queries into focused sub-queries, and injects session context. “Sort out my billing issue” becomes three precise sub-queries before a single vector database call is made.

Routing Agent: Determines which knowledge sources and external tools address each sub-query: vector stores, SQL databases, live APIs, calculators, CRM systems. Rather than querying one vector database, the system routes each sub-query to the most appropriate data source.

Validation Agent: Once documents are retrieved, this agent applies consistency checks, source verification, and confidence scoring before the retrieved content informs generation. This is the layer that catches retrieval failures before they become hallucinations.

Reasoning Agent: Performs higher-order synthesis across retrieved chunks: ranking evidence by relevance, clustering contradictory information, and resolving conflicts between multiple source documents.

The ReAct Loop in practice:

Query → Plan → Route → Retrieve → Validate → Reason → Answer

(or loop back to Plan with a corrected sub-query). This architecture enables tool calling through APIs, the bot stops being a read-only responder and becomes a system that can take verified, auditable actions.

The honest tradeoff:

Attribute | Standard RAG | Agentic RAG |

Retrieval passes | 1 (fixed) | Iterative (until stop condition) |

Self-correction | No | Yes |

CRM / API action capability | No | Yes |

Multi-source routing | No | Yes |

Latency | Low, predictable (<2s) | Higher, variable (3–8s) |

Token cost per query | Low (single LLM call) | Significantly higher (multi-step) |

Debugging complexity | Linear and transparent | Control-related failures (loops, cascades) |

The token cost gap is real and often underestimated. That table is not a reason to avoid Agentic RAG; it is a reason to run the five-stage framework below before committing to it.

The 5-Stage Decision Framework

Run your current chatbot through each stage in order. Each stage produces a binary signal: Standard RAG is sufficient, or an architectural upgrade is triggered. If you reach Stage 5 with zero triggers, standard RAG is still the correct choice; forcing agentic complexity on top of it will increase operational cost without improving outcomes. Two or more triggers mean the cost of not upgrading now exceeds the cost of building the loop.

Stage 1: Query Complexity: Single-Turn FAQ or Multi-Hop Reasoning?

Standard RAG handles single-hop queries cleanly: “What are your pricing plans?” “What’s your returns policy?” One retrieval, one answer.

Agentic RAG is triggered by multi-hop queries: “Check my last invoice, find the discount code that was applied, confirm it matches the rate in my contract, and if it doesn’t, raise a billing dispute ticket.” These require decomposition into sub-queries, routing across multiple data sources, synthesis of evidence, and action on the result. No amount of prompt engineering replicates this; the architecture itself is the constraint.

Decision Rule: Pull a random sample of 200 real queries from your conversation log. Classify each as single-hop or multi-hop. If more than 15% of your live query volume is multi-hop, you have crossed the Stage 1 threshold.

Stage 2: Retrieval Failure Rate: Is Your First Pass Reliable?

Most businesses attribute chatbot errors to the LLM. In practice, the retrieval step fails more often than the generation step.

Standard RAG is sufficient when your faithfulness score does the generated answer match the retrieved document? is consistently above 85% on real production queries.

Agentic RAG is triggered when single-pass retrieval demonstrably fails for a significant portion of queries. In a standard RAG system, if retrieval fails to find the right document, the LLM generates a poor answer with no recovery mechanism. In an Agentic RAG system, the validation agent evaluates retrieval quality, triggers a corrective search with a refined query, and withholds generation until the retrieved context meets a confidence threshold. This is the architectural mechanism that reduces hallucination rates from the industry average of 15–20% down to sub-2%.

Track three metrics on your production log: retrieval precision and recall, faithfulness score, and task completion rate.

Decision Rule: A faithfulness score below 85% on real production queries means your retrieval pipeline is failing, not your prompt. Adding a validation agent is the correct fix.

Stage 3: CRM and API Action Requirement: Does the Bot Need to Do or Just Answer?

This is the clearest architectural trigger and the one with the most direct revenue connection.

Standard RAG is sufficient when the chatbot’s entire function is to respond surface information, answer questions, direct users to resources. No write-backs needed.

Agentic RAG is triggered the moment the chatbot needs to act. Update a CRM contact record. Book an appointment against a live calendar. Check real-time inventory. Escalate a ticket with a populated summary. Process a partial refund. Any workflow requiring a write-back to an external system requires tool-use agents, which are architecturally Agentic RAG.

A customer support chatbot for a UK law firm, for example, cannot just answer questions about fee structures. It needs to screen for conflicts of interest, check the client database, and if cleared, create a client intake record in the case management system. None of that is possible in standard RAG. All of it is achievable with a properly scoped Agentic RAG system with tool-use agents and human-in-the-loop escalation rules.

Decision Rule: If any single workflow in your chatbot’s scope requires a write-back to any external system, CRM, booking platform, ticketing tool, or database, you need tool-use agents. That means Agentic RAG.

Stage 4: Latency Sensitivity: What Is Your Acceptable Response Time?

This is the most underreported tradeoff in every RAG comparison. The omission causes real production failures.

Standard RAG is sufficient when your use case requires consistent sub-2-second response times. Live website chat on a high-traffic product page has a real bounce-rate consequence if latency exceeds two seconds. Standard RAG single retrieval pass, single LLM call is architecturally suited to that profile.

Agentic RAG is appropriate when your use case can tolerate 3–8 second responses in exchange for significantly higher resolution quality: after-hours lead qualification, async support ticket triage, back-office CRM updates, complex service booking flows where users expect brief processing time.

The most cost-effective production pattern at scale is a lightweight classifier agent sitting in front of both pipelines. It reads the incoming query, classifies it as simple (single-hop, informational) or complex (multi-hop, action-required), and routes accordingly. Simple queries go to the standard RAG for sub-2-second resolution. Complex queries go to the agentic loop for thorough, action-capable resolution. This is how enterprise-scale systems manage cost and quality without sacrificing user experience.

Decision Rule: Map your actual query distribution against your latency SLA. If 70% or more of your queries are simple lookups, the hybrid routing layer is the right architecture not a full agentic migration for everything.

Stage 5: Compliance and Auditability: Do You Need a Traceable Decision Trail?

This stage sometimes reverses decisions made in previous stages. It is the most important consideration for regulated industries.

Standard RAG with Corrective RAG (CRAG) is preferred when you operate in healthcare, legal services, or financial services where every AI-generated response must trace back to a specific, identifiable source document. The audit trail must be linear and reproducible. Under UK GDPR Article 22, customers retain the right to contest decisions made through automated processing. Under ICO guidelines, your system must provide “meaningful information about the logic involved” in any AI-assisted decision. A standard RAG pipeline provides this naturally.

Agentic RAG with compliance guardrails is required when you need both action capability and a regulated operating context. Every agent decision must be logged with its source document, confidence score, tool call executed, and resulting action. Every escalation trigger must be documented. Human-in-the-loop handoff workflows must be hardcoded, not optional. This is not a contradiction it requires building auditability as a first-class architectural requirement from day one, not retrofitting it later.

Decision Rule: In a regulated industry, you don’t avoid Agentic RAG because of compliance requirements. You build it with compliance as a design constraint.

Your 5-Stage Decision Scorecard

Stage | Trigger Condition |

1. Query Complexity | >15% multi-hop queries in live log |

2. Retrieval Failure Rate | <85% faithfulness score on production queries |

3. CRM / API Action | Any workflow requires external write-back |

4. Latency Sensitivity | Complex queries tolerate 3–8s responses |

5. Compliance / Auditability | Regulated industry with action requirements |

Score 0–1: Standard RAG is the correct choice. Optimise your retrieval pipeline first by improving the chunking strategy, tuning your embedding model, and reviewing your top-k parameter. Architecture is not your problem yet.

Score 2–3: Hybrid Architecture. Add a query routing layer, implement Corrective RAG as an intermediate step, and build tool-use agents only for the specific workflows that require CRM write-backs. Do not migrate your entire chatbot to the agentic loop.

Score 4–5: Full Agentic RAG with governance layer. The cost of staying on standard RAG in lost leads, manual CRM work, resolution failures, and compliance exposure now exceeds the build cost of the agent loop.

The Hidden Costs You Should Consider

Token cost multiplier: Agentic RAG runs multiple LLM calls per query. At 5,000 queries per month, standard RAG at ~£0.003 per query equals £15/month. Agentic RAG with four agent calls at ~£0.012 per query equals £60/month. At 50,000 queries, that gap is £150 versus £600. The upgrade is worth it when the resolution improvement converts enough leads or eliminates enough manual CRM hours to justify the delta.

Context bloat: The agent loop accumulates retrieved content across iterations until the prompt grows so large that LLM output quality degrades. Solved by hard context window limits in your stop conditions and active pruning of low-relevance chunks at the validation layer.

Tool-call cascade risk: One tool call triggers a dependent tool call, which triggers another, compounding latency and cost in an unbounded chain. Solved by maximum step count limits, typically 5–7 steps before mandatory human escalation is enforced at the orchestration layer.

Observability overhead: You cannot debug an agentic system without a tracing layer. LangSmith, Arize Phoenix, or custom structured logging are not optional extras; they are foundational requirements. Every agent decision, every tool call, every retrieval pass must be captured with a timestamp, confidence score, and outcome. Budget for this from day one.

The Recommended Migration Path

Phase 1: Audit your current chatbot (2–3 weeks). Pull 200 real production queries. Classify by complexity. Measure faithfulness score and retrieval precision. Identify CRM write-back requirements. Map latency distribution. This audit gives you the objective data to scope the migration and prioritise which agent capabilities to build first.

Phase 2: Add Corrective RAG before full agentic migration (3–6 weeks). CRAG is the architectural bridge between standard RAG and full Agentic RAG. It adds self-evaluation and corrective retrieval to your existing pipeline without the full agent loop overhead. When the validation step detects low-confidence retrieval, CRAG triggers a second, more targeted search before generation, typically recovering 5–15 percentage points of faithfulness score.

Phase 3: Build agentic capability incrementally (ongoing). Start with CRM read-only tool access. Validate that query planning and routing agents operate correctly with a single external system before expanding. Add write-back capability once read operations are stable. Then, multi-source routing. Then, the full multi-agent loop with stop conditions, observability, and compliance logging in place.

The Architecture Decision Is a Business Decision

Automation helps, but without structured controls, it can spread errors faster. For example, a bot updating booking slots incorrectly can lead to overbooking or wrong confirmations.

That’s why combining AI business process automation with controlled chatbot logic is essential. It ensures the bot:

- Uses the right data

- Follows business rules

- Escalates when necessary

This combination boosts efficiency without risking customer trust.

Conclusion

The right question is never “which architecture is more technically advanced?” It’s: “What does my business lose every day it stays on the wrong one?”

If your chatbot can answer but not act, it is leaving CRM updates unprocessed, lead qualifications incomplete, and service resolutions half-finished every single day. The manual work to fill those gaps has a measurable cost. So does the lead leakage when a high-intent customer asks a complex question after hours and gets a vague, partial answer instead of a qualified response and a booked appointment.

Agentic RAG closes that operational gap. But only when your actual query complexity, retrieval failure rate, CRM action requirements, latency tolerance, and compliance context justify the investment.

Run the five-stage framework against your real production data. The score tells you where you are. The migration path tells you what to do next.

Conclusion

AI chatbots can transform customer support for UK service businesses, but hallucinations, wrong answers, inconsistent responses, or outdated information undermine trust and efficiency. Controlling how your chatbot accesses and uses data is essential to prevent these errors. Using RAG systems, structured knowledge bases, and proper escalation rules ensures your chatbot only answers what it truly knows.

At Xtreeme Tech, we combine custom chatbot development with AI business process automation to build systems that are accurate, reliable, and integrated with your workflows. Our chatbots deliver consistent answers across platforms, automate repetitive tasks, and reduce workload, all while keeping the human touch intact.

Choosing the right chatbot is not just about automation; it’s about trust. A well-designed, controlled AI system minimizes hallucinations, improves customer experience, and allows your team to focus on strategic priorities. If you want a chatbot that works correctly and consistently, Xtreeme Tech provides the solution your business can rely on.